AI-Created Faces Can Be Reverse-Engineered To Expose True Faces They Trained On

By Mikelle Leow, 12 Oct 2021

A collection of AI-generated faces. Photo 194752647 © Max Mahey | Dreamstime.com

“Fake” faces might not be so fake after all. In response to a popular website called ‘This Person Does Not Exist’, where people can obtain photos of non-existent people dreamed up by artificial intelligence without stumbling into copyright issues, a team of scientists has conducted a study they call ‘This Person (Probably) Exists’ to reveal actual human faces that such tools were trained on.

As detailed in their paper, many of the AI-generated results closely resemble faces in the real photos used to train generative adversarial networks (GANs), meaning that it’s possible to unmask the people behind the AI “photos,” MIT Technology Review reports. After all, these images are how the algorithms learn what human faces look like.

To begin, a team at the University of Caen Normandy led by Ryan Webster observed how differently a model treats all-new, unseen data compared with data it was trained on, which it has likely processed thousands of times over.

Next, they created a second model that picks up on the cues separating the AI’s reactions to familiar images from its reactions to unseen ones, and taught it to use those cues to predict whether an image belonged to the training set. It’s not too difficult to tell when a model is looking at data it hasn’t seen before; it might still be able to name a particular object, for instance, but with less confidence than it would for data from a familiar set.
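The idea of telling seen from unseen data by confidence can be illustrated with a toy example. This is a minimal sketch and not the researchers' method: it swaps their trained attack model for a single confidence threshold, and the confidence scores are synthetic numbers invented for illustration. The function names `fit_threshold` and `is_probable_member` are hypothetical.

```python
import random

def fit_threshold(member_conf, nonmember_conf):
    """Pick the confidence cut-off that best separates known
    training-set members from known non-members."""
    candidates = sorted(set(member_conf) | set(nonmember_conf))
    total = len(member_conf) + len(nonmember_conf)
    best_t, best_acc = candidates[0], 0.0
    for t in candidates:
        # Guess "member" when confidence >= t, "non-member" otherwise.
        correct = sum(c >= t for c in member_conf)
        correct += sum(c < t for c in nonmember_conf)
        acc = correct / total
        if acc > best_acc:
            best_t, best_acc = t, acc
    return best_t, best_acc

def is_probable_member(confidence, threshold):
    """Guess whether an image was in the training set."""
    return confidence >= threshold

def clip(x):
    return min(1.0, max(0.0, x))

# Synthetic stand-in for a target model's confidence scores (assumption):
# high and tightly clustered on training images, lower and more spread
# out on images it has never seen.
rng = random.Random(0)
member_conf = [clip(rng.gauss(0.95, 0.03)) for _ in range(200)]
nonmember_conf = [clip(rng.gauss(0.75, 0.10)) for _ in range(200)]

threshold, accuracy = fit_threshold(member_conf, nonmember_conf)
print(f"threshold={threshold:.3f}, accuracy on known examples={accuracy:.2%}")
print(is_probable_member(0.99, threshold))
```

A real attack would have to obtain these scores by querying the model under study, and would typically look at richer signals than one number per image.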

Going even further, the group not only identified the original photos that the AI versions were based on, but also used a separate face-recognition AI to track down non-identical photos that appeared to show the same people.
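Matching of this kind is commonly done by comparing face embeddings, the numeric vectors a face-recognition model produces for each photo. The sketch below assumes that approach; the article does not say which technique the team used, and the three-number vectors and the names `person_a`, `person_b` and `person_c` are made up for illustration (real embeddings have hundreds of dimensions).

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def closest_identity(query, gallery):
    """Return the (name, similarity) of the gallery face most like the query."""
    scored = ((name, cosine_similarity(query, emb)) for name, emb in gallery.items())
    return max(scored, key=lambda pair: pair[1])

# Hypothetical embeddings of real training photos.
gallery = {
    "person_a": [0.9, 0.1, 0.2],
    "person_b": [0.1, 0.8, 0.3],
    "person_c": [0.2, 0.2, 0.9],
}

# Hypothetical embedding of an AI-generated face.
generated_face = [0.85, 0.15, 0.25]

best_name, best_score = closest_identity(generated_face, gallery)
print(best_name, round(best_score, 3))
```

A high similarity score only suggests that two photos show the same person; it would still need a human check.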

The worry is that attackers could probe an AI model and recover information that scientists assumed had been obscured during training. For example, a third party could take a model trained on medical data and trace its outputs back to actual people with an illness.

However, Webster also shared the other side of the coin, noting that artists can use this method to check whether their work has been used to train an AI without their knowledge. “You could use a method such as ours for evidence of copyright infringement,” he added. Additionally, researchers can run their projects through such systems to verify that results aren’t too similar to the training set.

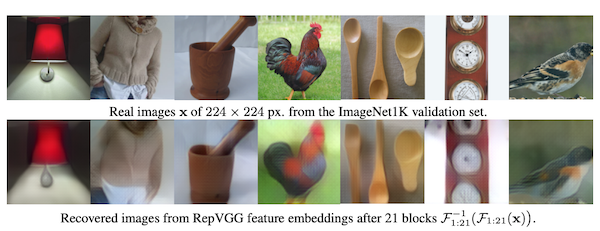

For instances where a person has no access to the training data, Jan Kautz, vice president of learning and perception research at Nvidia, and his coworkers have developed an algorithm that attempts to retrace the layers a model goes through to identify an image. By interrupting the model midway and reversing its direction, the AI was able to closely reproduce input images from the popular ImageNet dataset:

Photos from the ImageNet dataset (above) vs. images reverse-engineered by AI. Screenshot via Dong et al.

The Nvidia team hopes to explore methods to stop algorithms from exposing confidential data.

[via MIT Technology Review, cover photo 194752647 © Max Mahey | Dreamstime.com]
